Ayan Majumdar

Ph.D. Candidate in Computer Science

Max Planck Institute for Software Systems

Saarland University

Biography

I am a Ph.D. candidate and Doctoral Researcher in Computer Science at the Max Planck Institute for Software Systems and Saarland University, Germany. I am jointly advised by Prof. Isabel Valera and Prof. Krishna Gummadi.

I am passionate about bridging the gap between theoretical research (in fairness, causality, and explainability) and the practical deployment of safety-compliant Automated Decision-Making and Generative AI systems. Prior to my Ph.D., I completed my Master’s thesis titled “On Computing Counterfactuals for Causal Fairness” with the Networked Systems Research Group, supervised by Prof. Krishna Gummadi and Prof. Isabel Valera.

I have also been fortunate to collaborate with several incredible mentors: Preethi Lahoti (Max Planck Institute for Informatics), Prof. Dietrich Klakow (Spoken Language Systems, Saarland University), and Prof. Adrian Weller (University of Cambridge).

Interests

- AI Safety

- LLMs & VLMs

- Fairness

- Explainability

- Causality

- Ph.D. in Computer Science (ongoing), Saarland University and Max Planck Institute for Software Systems, Saarbrücken, Germany

- M.Sc. in Computer Science (2021), Saarland University, Saarbrücken, Germany

- B.Tech. in Electronics and Communication Engineering (2015), Heritage Institute of Technology, Kolkata, India

Recent News

- New publication! 🎉 Our work on operationalizing disparities in non-binary treatment decisions has been accepted to the AAAI 2026 AI for Social Impact Track!

- Preprint alert! 🚨 My internship work analyzing the capabilities of LLMs in detecting demographic-targeted social biases is live on arXiv!

- Completed a Ph.D. research internship at Huawei Munich Research Center’s Trustworthy Technology and Engineering Lab!

Skills

5+ years of experience building scalable research pipelines.

Expertise in LLM/VLM inference, prompt optimization, and safety-critical applications.

Specialized in fairness, explainability, and aligning models with compliance frameworks.

Deep experience in causality, explainability, and evaluating automated decision-making systems.

Advanced statistical analysis and visualization of large-scale real-world data.

Research and industrial experience in developing novel methods and evaluation benchmarks.

Education

Graduate student in computer science with a strong focus on machine learning and artificial intelligence.

- Grade: 1.2 (German scale, where 1.0 is the best grade)

- Core courses: Artificial Intelligence, Information Retrieval and Data Mining, Machine Learning

- Advanced courses: Statistical Natural Language Processing, Neural Networks: Implementation and Application, High-level Computer Vision, Methods of Mathematical Analysis, Statistics with R, Human-centered Machine Learning, Machine Learning in Cybersecurity, Information Extraction

- Seminars: Machine Learning

Undergraduate student in electronics and communication engineering.

- Grade: 8.8 / 10

- Core courses: Signals and Systems, Digital Electronics and Integrated Circuits, Analog Circuits, Control Systems, Digital Communications, Analog Communications, Digital Signal Processing

- Elective courses: Microprocessors and Microcontrollers, Data Structures and C, Information Theory and Coding, Object Oriented Programming, Microelectronics and VLSI Design, Embedded Systems, Database Management Systems

Experience

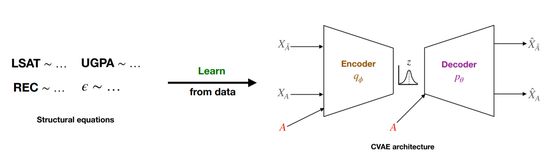

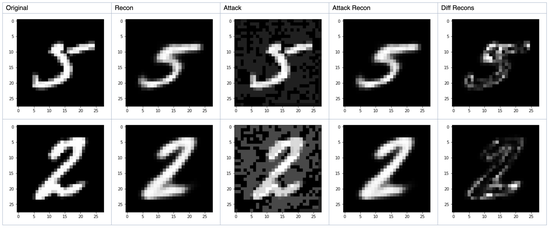

Research student working on fairness in generative models, with a particular focus on variational autoencoders (VAEs).

- Worked on estimating, quantifying and mitigating bias in variational generative models.

- Additionally explored the potential of such models in a range of applications: anomaly detection, adversarial example detection and defense, and classifier calibration.

- Designed a system based on variational autoencoders to generate counterfactual data for fairness scenarios under minimal causal assumptions.

- Supervisors: Prof. Krishna Gummadi, Prof. Isabel Valera

Worked as part of the project on Mutual Intelligibility and Surprisal in Slavic Intercomprehension.

- Data collection and cleaning through web crawling and multi-sentence alignment for multilingual NLP experiments.

- Worked on developing the web-based application for the linguistic experiment to support user studies.

- Supervisor: Prof. Dietrich Klakow

Worked as part of the Engineering Services Communication Products group. Responsibilities:

- Development and maintenance of the flagship Session Border Controller (SBC) for a reputable US client.

- Development of the system's core functionality, building on SIP (Session Initiation Protocol) and VoIP (Voice over IP).

Worked on developing, optimizing, and testing a novel community-based routing algorithm that uses social metrics for message transmission in delay-tolerant networks in post-disaster scenarios.

- Supervisor: Prof. Tamaghna Acharya

Related Projects

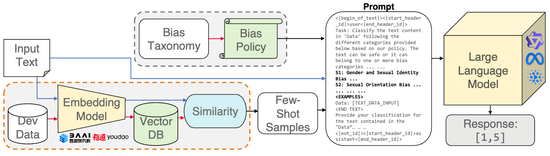

Evaluating LLMs for Demographic-Targeted Social Bias Detection: A Comprehensive Benchmark Study

We conduct a comprehensive benchmark study evaluating LLMs for demographic-targeted social bias detection in raw text data, revealing that while certain configurations show promise for scale, significant performance gaps persist across complex social categories.

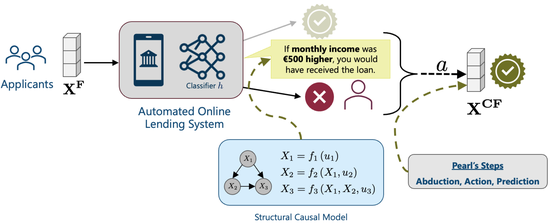

CARMA: Causal Algorithmic Recourse with (Neural) Model-based Amortization

We explore improving the practicality of providing causal recourse explanations through a novel neural network model-based amortization framework.

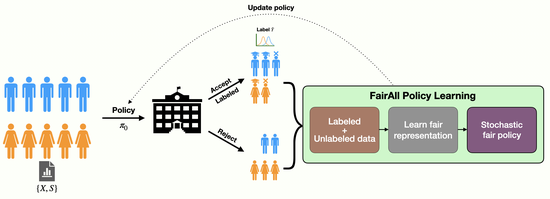

FairAll: Fair Decisions With Unlabeled Data

We explore how unlabeled data can help achieve fair, optimal, and stable decision-making in societal settings.

Generating Counterfactuals for Causal Fairness

Project conducted as part of my Master’s thesis, exploring the use of generative models to compute counterfactuals for fairness.

Bias in Generative Models

This project explores potential bias in generative models such as variational autoencoders and briefly looks at ways to mitigate it.

Using VAEs for Robustness

Exploring potential use cases of variational autoencoders in the context of robustness of ML systems.

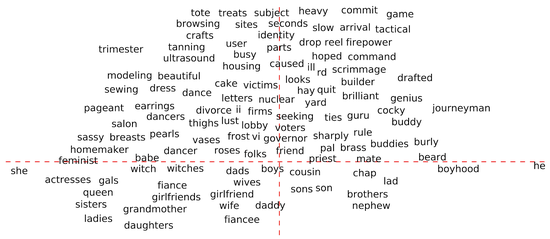

Debiasing Word Embeddings

Mini-project that looks at potential bias in pre-trained word embeddings and at methods for removing it.

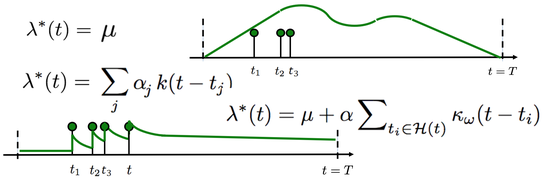

Temporal Point Processes and Smart Broadcasting

Project that explores various algorithms for temporal point processes, along with one potential use case: smart broadcasting of messages.

Predicting Vulnerability of Windows Machines to Malware

This is a data-science project that aims to predict which Windows machines are more prone to malware attacks. I apply several different methods to the task and provide a comparative analysis of them.

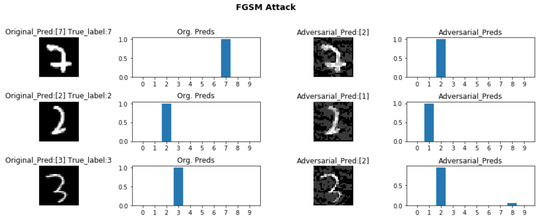

Adversarial Attacks and Defense for CNN

The project explores CNN classification models and their vulnerability to various adversarial attacks, as well as a defense mechanism against them.

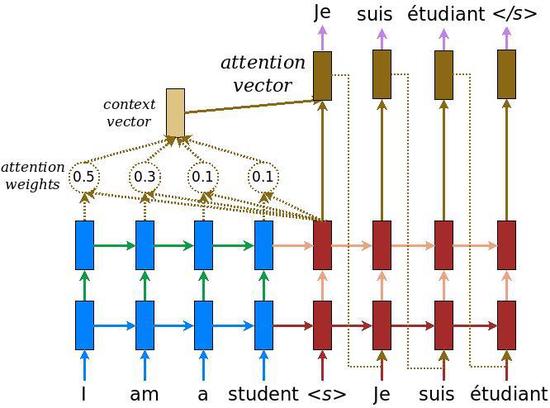

Neural Machine Translation

A mini-project that looks at the task of neural machine translation using sequence models and an attention mechanism.

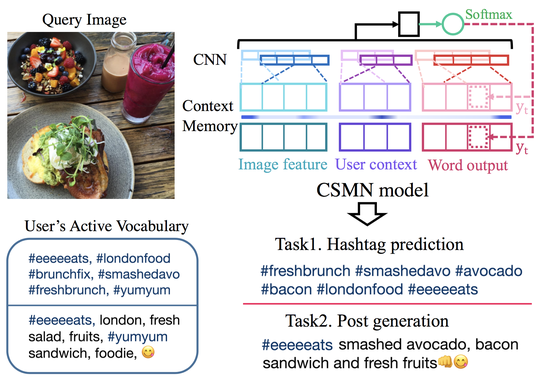

Exploring Personalized Image Captioning

This project explores personalized image caption generation. We explore different architecture choices for Attend2u and analyze the personalization of the generated captions.

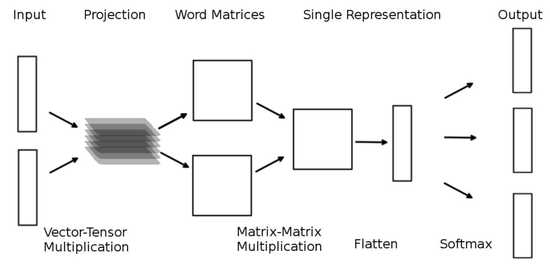

Word2Mat: A New Word Representation

This project explores a novel technique that embeds words as matrices instead of vectors, and examines how this representation could better capture contextual meaning.

Awards & Achievements

- Awarded a fee-waiver scholarship to attend the Nordic Probabilistic AI Summer School (ProbAI) (June 2021).

- Invited to attend the Microsoft Research conference Frontiers in Machine Learning (July 2020).

- Selected for research assistantship at the Max Planck Institute for Software Systems (October 2019).

- Won the Spot Award and the Insta Award at Infosys Ltd. for outstanding performance and contributions to the project.